
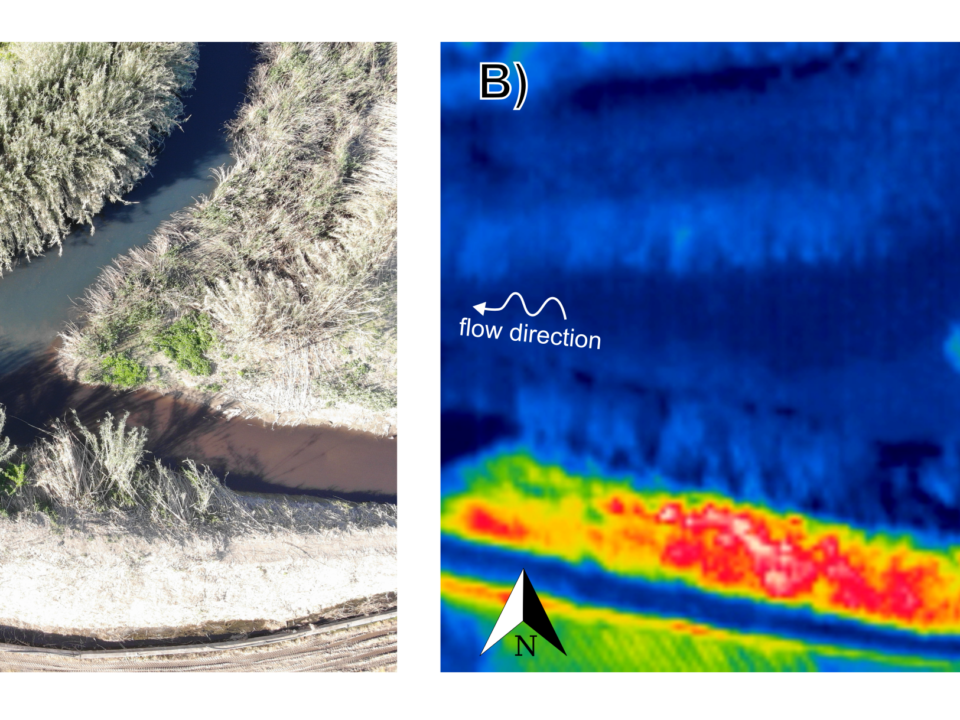

27 October 2022

The ash produced by forest fires is a complex mixture of organic and inorganic particles with many properties. Amounts of ash and char are used to roughly evaluate the impacts of a fire on nutrient cycling and ecosystem recovery. Numerous studies have suggested that fire severity can be assessed by measuring changes in ash characteristics. Traditional methods to determine fire severity are based on in situ observations and visual estimates of changes in the forest floor and soil, both of which are laborious and subjective. These measures primarily reflect the level of consumption of organic layers, the deposition of ash (particularly its depth and color), and fire-induced changes in the soil. Recent studies have suggested adding remote sensing techniques to the field observations and using machine learning and spectral indices to assess the effects of fires on ecosystems. While index thresholding is easy to implement, its effectiveness over large areas is limited by how well the thresholds cover the forest types and fire regimes present. Machine learning algorithms, on the other hand, allow multivariate classification, but training is complex and time-consuming when analyzing space-time series. As a result, there is currently no consensus regarding a quantitative index of fire severity.

Considering that wildfires play a major role in controlling forest carbon storage and cycling in fire-suppressed forests, this study examines the use of low-cost multispectral imagery across the visible and near-infrared regions, collected by unmanned aerial systems (UAS), to determine fire severity according to the color and chemical properties of vegetation ash. Multispectral imagery might reduce the imprecision inherent in manual color matching and produce an extensive and accurate spatio-temporal severity map. The suggested severity map is based on spectral information that deep learning algorithms use to evaluate chemical and mineralogical changes. These methods quantify total carbon content and assess the corresponding fire intensity required to form a particular residue. Three learning algorithms (PLS-DA, ANN, and 1-D CNN) were designed and applied to two datasets (RGB images and Munsell color versus UAS-based multispectral imagery), and the multispectral prediction results were excellent. Therefore, deep network-based near-infrared remote sensing technology has the potential to become a reliable alternative method to assess fire severity.
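To illustrate what the abstract means by index thresholding, the following is a minimal sketch in Python. It is not the method used in the study. It assumes per-pixel near-infrared and red reflectances are available, uses the well-known NDVI formula as the spectral index, and maps it to severity labels with class boundaries (`SEVERITY_BREAKS`) that are purely hypothetical and chosen for illustration.

```python
from typing import List, Sequence, Tuple

# Hypothetical NDVI upper bounds per class; illustrative only, not from the study.
SEVERITY_BREAKS: List[Tuple[float, str]] = [
    (0.1, "high"),
    (0.3, "moderate"),
    (0.5, "low"),
]


def ndvi(nir: float, red: float) -> float:
    """Normalized difference vegetation index for one pixel's reflectances."""
    total = nir + red
    if total == 0:
        return 0.0  # avoid division by zero on pixels with no signal
    return (nir - red) / total


def classify_pixel(nir: float, red: float) -> str:
    """Assign a severity label by comparing NDVI against fixed thresholds."""
    value = ndvi(nir, red)
    for upper_bound, label in SEVERITY_BREAKS:
        if value < upper_bound:
            return label
    return "unburned"


def classify_image(
    nir_band: Sequence[Sequence[float]],
    red_band: Sequence[Sequence[float]],
) -> List[List[str]]:
    """Apply the per-pixel rule to two equally shaped 2-D bands."""
    return [
        [classify_pixel(n, r) for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


if __name__ == "__main__":
    nir = [[0.5, 0.2], [0.3, 0.4]]
    red = [[0.1, 0.2], [0.2, 0.2]]
    for row in classify_image(nir, red):
        print(row)
```

The fixed boundaries are what make this approach simple and also what limits it: thresholds tuned for one forest type or fire regime may not transfer to another, which is the weakness the abstract points out.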

How to cite: Brook, A.; Hamzi, S.; Roberts, D.; Ichoku, C.; Shtober-Zisu, N.; Wittenberg, L. Total Carbon Content Assessed by UAS Near-Infrared Imagery as a New Fire Severity Metric. Remote Sens. 2022, 14, 3632. https://doi.org/10.3390/rs14153632

